Modeling a single voxel#

The voxel regression page has a worked example of applying simple regression) on a single voxel.

This page runs the same calculations, but using the General Linear Model notation and matrix calculations.

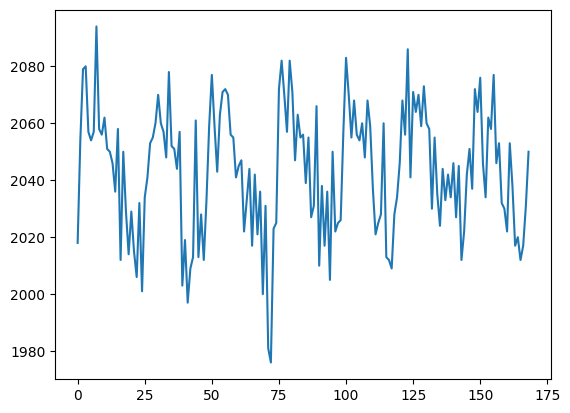

Let’s get that same voxel time course back again:

import numpy as np

import matplotlib.pyplot as plt

import nibabel as nib

# Only show 6 decimals when printing

np.set_printoptions(precision=6)

# Load the function to fetch the data file we need.

import nipraxis

# Fetch the data file.

data_fname = nipraxis.fetch_file('ds114_sub009_t2r1.nii')

img = nib.load(data_fname)

data = img.get_fdata()

# Knock off the first four volumes (to avoid artefact).

data = data[..., 4:]

# Get the voxel time course of interest.

voxel_time_course = data[42, 32, 19]

plt.plot(voxel_time_course)

[<matplotlib.lines.Line2D at 0x7f557836cbe0>]

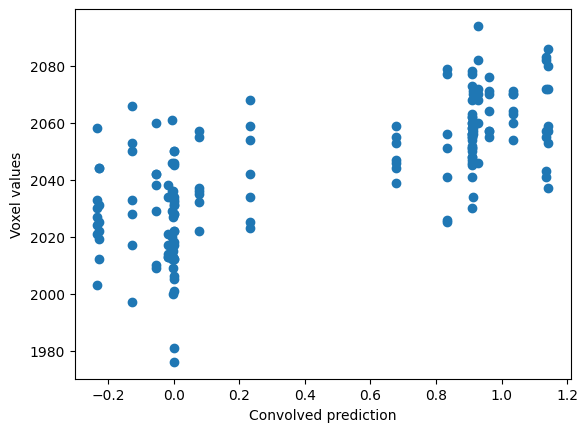

Load the convolved time course, and plot the voxel values against the convolved regressor:

tc_fname = nipraxis.fetch_file('ds114_sub009_t2r1_conv.txt')

# Show the file name of the fetched data.

convolved = np.loadtxt(tc_fname)

# Knock off first 4 elements to match data.

convolved = convolved[4:]

# Plot.

plt.scatter(convolved, voxel_time_course)

plt.xlabel('Convolved prediction')

plt.ylabel('Voxel values')

Downloading file 'ds114_sub009_t2r1_conv.txt' from 'https://raw.githubusercontent.com/nipraxis/nipraxis-data/0.5/ds114_sub009_t2r1_conv.txt' to '/home/runner/.cache/nipraxis/0.5'.

Text(0, 0.5, 'Voxel values')

As you remember, we apply the GLM by first preparing a design matrix, that has one column corresponding for each parameter in the model.

In our case we have two parameters, the slope and the intercept.

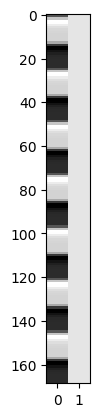

First we make our design matrix. It has a column for the convolved regressor, and a column of ones:

N = len(convolved)

X = np.ones((N, 2))

X[:, 0] = convolved

plt.imshow(X, cmap='gray', aspect=0.1, interpolation='none')

<matplotlib.image.AxesImage at 0x7f55783b6830>

\(\newcommand{\yvec}{\vec{y}}\) \(\newcommand{\xvec}{\vec{x}}\) \(\newcommand{\evec}{\vec{\varepsilon}}\) \(\newcommand{Xmat}{\boldsymbol X} \newcommand{\bvec}{\vec{\beta}}\) \(\newcommand{\bhat}{\hat{\bvec}} \newcommand{\yhat}{\hat{\yvec}}\)

Our model is:

We can get our least mean squared error (MSE) parameter estimates for \(\bvec\) with:

where \(\Xmat^+\) is the pseudoinverse of \(\Xmat\). When \(\Xmat\) is invertible, the pseudoinverse is given by:

Let’s calculate the pseudoinverse for our design:

import numpy.linalg as npl

Xp = npl.pinv(X)

Xp.shape

(2, 169)

We calculate \(\bhat\):

beta_hat = Xp @ voxel_time_course

beta_hat

array([ 31.185514, 2029.367689])

We can then calculate \(\yhat\) (also called the fitted data):

y_hat = X @ beta_hat

Finally, we may be interested to calculate the MSE of this model:

# Residuals are actual minus fitted.

e_vec = voxel_time_course - y_hat

mse = np.mean(e_vec ** 2)

mse

245.00339852605674

Notice that the \(\bhat\) parameters are the same as the slope and intercept from the Scipy calculation using linregress:

import scipy.stats as sps

sps.linregress(convolved, voxel_time_course)

LinregressResult(slope=31.18551366491453, intercept=2029.367689291584, rvalue=0.7044637722561978, pvalue=1.1832511547748845e-26, stderr=2.4312815394118448, intercept_stderr=1.634742742490283)